The AI Use Gap Inside Your Business

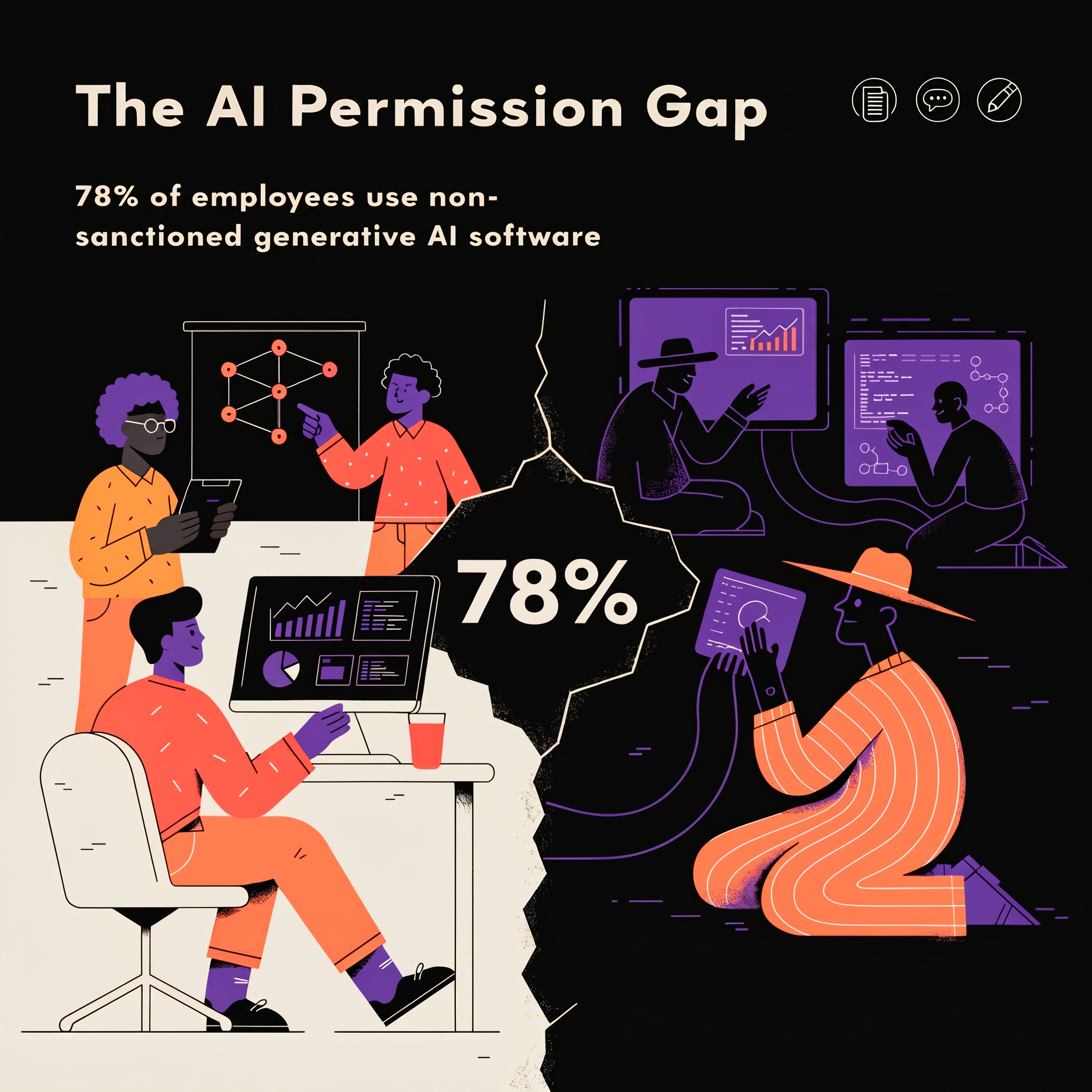

The most dangerous moment in any technology shift isn't when people resist it. It's when they quietly adopt it without anyone noticing.

By the time most businesses write an AI policy, their employees have already been using the tools for eighteen months. The AI strategy meeting hasn't happened yet. But the AI adoption already has.

Right now, while you're reading this, someone in your business is using AI. They're drafting an email with it, summarising a report, pulling together a proposal, maybe writing copy for a client. They didn't ask for permission. They didn't raise a hand in a meeting. They just opened a browser tab and got on with it.

That's not a criticism of them. It's a description of reality.

Many businesses have already made a first move. They've bought an enterprise licence for Microsoft Copilot, rolled out ChatGPT Team, or given everyone access to Claude. Someone internal (usually a curious, tech-leaning person with the word "champion" attached to their job title) has been quietly evangelising. That's a start. But for most organisations, it's also where the strategy stops.

What sits between "we have access" and "we use this well" is a gap that most leadership teams haven't fully looked at. Not because they don't care. Because it doesn't feel urgent. Things seem to be working. People seem to be getting things done faster. And that's precisely when you should be paying attention.

Without a framework, every employee makes their own decisions. That sounds fine until you think about what those decisions actually cover.

What data are people putting into these tools? A client brief? A commercial contract? A board paper? A salary spreadsheet? Every prompt is sent to the provider's servers for processing, and unless you've specifically configured enterprise data protection, that information may be retained or used to train future models, depending on the plan and its settings. Your people don't know that. Why would they? Nobody told them.

Are confidentiality agreements being respected? Your NDA with a client almost certainly predates the existence of the tools your team is using today. It was written with humans in mind. The question of whether feeding client data into a third-party AI system constitutes a breach is not hypothetical. It's a live compliance question most businesses haven't asked.

What about legal exposure? In the rush to save time and look productive, employees are generating outputs that carry your company's name. AI is confident. It sounds authoritative. And it hallucinates, producing plausible statements that are simply false. Someone will copy and paste something factually wrong into a client deliverable. Someone already has.

None of this is meant to frighten you away from AI. The opposite. It's meant to show you that the risk isn't in the tool. It's in the absence of governance around it.

This isn't a discipline problem. It's a human one. We are hardwired for shortcuts. Every single one of us. When calculators arrived in classrooms in the 1970s, teachers were terrified students would stop learning to think. When Steve Jobs handed us the world in our pockets, many employers tried to ban phones from the workplace. Both times, the technology won. Not because institutions endorsed it, but because people found it useful and used it.

AI is the most powerful shortcut most of your employees have ever encountered. For the generation that grew up with Google, it feels entirely natural to reach for it. For older colleagues, it's a revelation. For both, the temptation to just use it, without waiting for a policy document, is completely understandable. You would have done the same thing.

The question isn't whether it's happening. It is. The question is whether it's happening in a way that protects your business and serves your clients.

Think about what it means to have a personal assistant (PA). Not a generic assistant, but someone who knows your business inside out. They know your clients' names, your tone in different contexts, what you never say in a proposal, what your legal team always flags, what your best work sounds like. They've been briefed, trained, embedded. They make you faster without making you sloppy. That is what AI can be for every person in your business. Not a chatbot you reach for occasionally. A working partner, calibrated to how your organisation thinks and operates.

But that doesn't happen with a login and a licence. It requires deliberate decisions. What are the boundaries? What data is in scope and what isn't? What does good output look like for your business, and how do your people know when they're seeing it? Who reviews what? What gets checked before it goes out? These aren't technology questions. They're business questions. And they need business answers.

Organisations that get this right don't just reduce their risk. They get a compounding return. Every person becomes more effective. Decisions get better. Time gets recovered. The quality of client work improves. None of that happens by accident.

The AI use gap is costing you now. In risk you can't see yet. In inconsistency you can't measure. In time saved today that may be creating problems downstream. But it's also costing you something more immediate. Opportunity.

The businesses that will look back on this period as transformative won't be the ones that moved fastest. They'll be the ones that moved most deliberately. The ones that asked the right questions before the wrong things happened. The ones that treated AI not as a productivity toy, but as a structural change in how their business operates.

That gap inside your business isn't a technology problem waiting for a technical fix. It's a leadership question that's waiting for you to ask it.